Free Course

The Software Engineer's Guide to How LLMs Work

How modern LLMs work in plain engineer English. Kill the magic-act feeling. Make architecture calls without renting a professor.

See the full curriculum (14 lessons) →

Get the full material

Free newsletter. Subscriber lesson links when chapters ship. No spam. Leave anytime.


Why subscribe

Stop treating the model like a sealed appliance. Build a real mental picture of what happens inside so latency, bill size, and context limits feel like numbers you own.

For: Software engineers shipping or integrating LLMs who want ammo for design review. No grad-school homework.

- A structured map of how modern LLMs work

- Intuition for choices that hit latency, quality, and bill size

- Words you can use when sales forwards you another vendor PDF

- Full course with sequential video lessons

- Subscriber links to every chapter

- A foundation you reuse when the next model drops

- A link between model mechanics and the implementation decisions you control

Subscribe free to unlock the full course and future updates.

Modern LLMs look like magic, but as engineers we can look inside and understand the basic concepts they operate on. The transformer network grew quite naturally out of the shortcomings of traditional seq2seq models. In this course, we will build a thorough mental model of how LLMs work, how they evolved, and what their basic functions are, so that they stop being an opaque API and start looking simple.

Who it is for: engineers who build or integrate AI systems and want under-the-hood clarity without jumping through pedigree hoops.

Format: short video lessons with structured progression.

Curriculum

What you’ll learn

Full video and written lessons are unlocked via the newsletter. Here’s the complete syllabus: 14 lessons in structured modules.

| # | Lesson | Summary |
|---|---|---|
| 1 | Course Introduction | How this guide is organized and what you will take away before we define LLMs. |

What Are LLMs?

| # | Lesson | Summary |

|---|---|---|

| 2 | Introduction: What is an LLM? | Frames the section so that we look at language models the right way. |

| 3 | Deep Neural Networks | What "deep" means and how stacked layers build representations from raw inputs. |

| 4 | Prediction Models | The standard prediction models to which standard machine learning and deep learning was applied. |

| 5 | Classification Models | Mapping inputs to discrete categories; intuition that carries over to modern LLM heads. |

| 6 | Image Models | Convolutional and vision architectures as contrast to sequence-first language models. |

| 7 | Seq2Seq Models | Encoder–decoder structure and why it matters for translation, summarization, and precursors to transformers. |

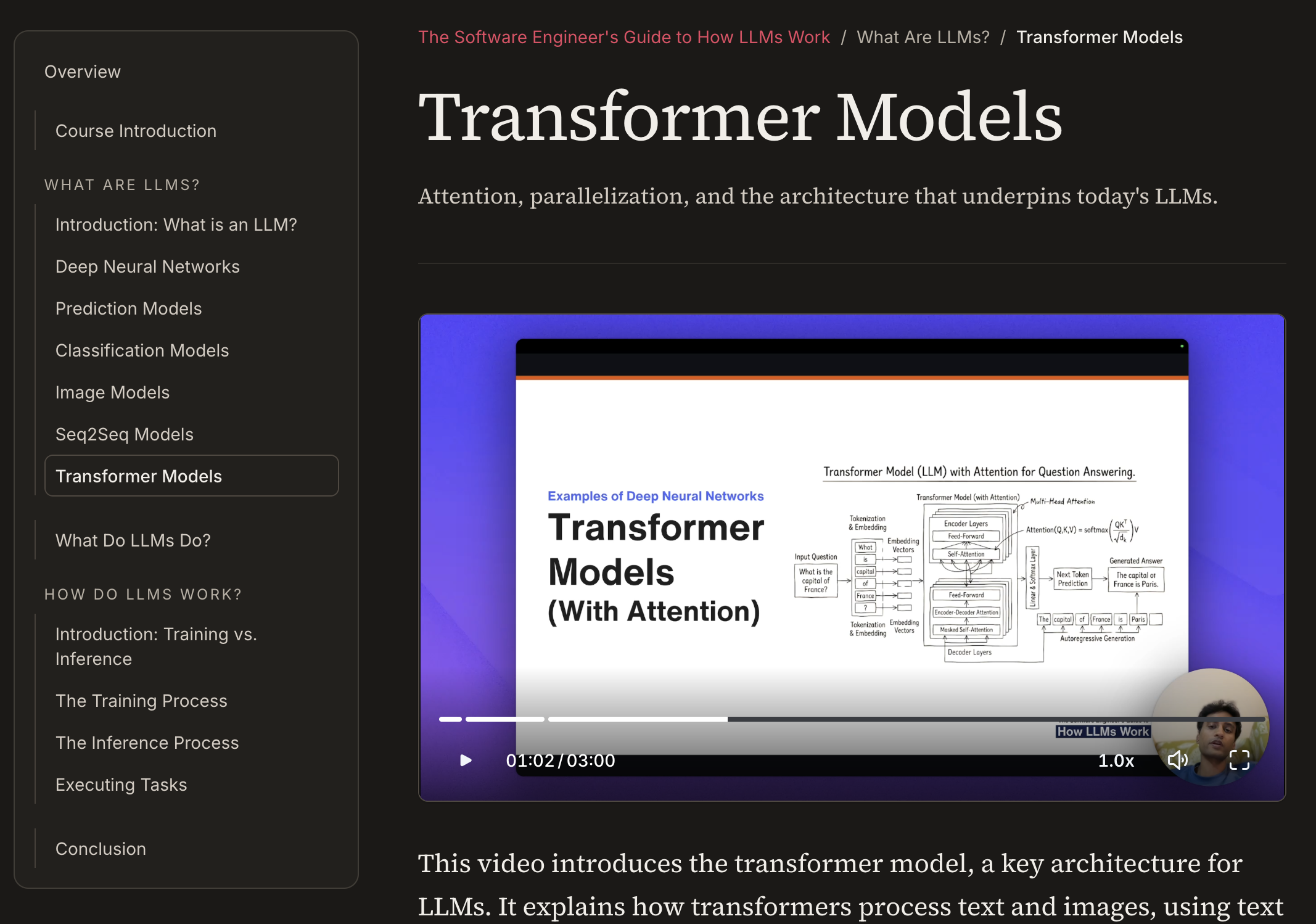

| 8 | Transformer Models | Transformer models as the next evolution of seq2seq models. |

| # | Lesson | Summary |
|---|---|---|
| 9 | What Do LLMs Do? | How and why an LLM is very similar to an autocomplete function for human languages. |

How Do LLMs Work?

| # | Lesson | Summary |
|---|---|---|
| 10 | Introduction: Training vs. Inference | Understand how training and inference are set up to enable the seemingly magical tasks that LLMs can perform. |
| 11 | The Training Process | Understand how raw text is transformed into something that the LLM can train on. |
| 12 | The Inference Process | How LLMs output tokens, and which piece of the process actually lives outside the LLM rather than inside it. |
| 13 | Executing Tasks | The basics of how the core abstraction of next-token prediction is used to perform complex tasks. |

| # | Lesson | Summary |
|---|---|---|
| 14 | Conclusion | Key takeaways and next steps. |