Issue #1

Enter: The Invariant

Why shipping production AI takes clear use cases and real system design. Not prompt tricks. Not a framed degree on the wall.

The Invariant is about production AI for software engineers who want the real story under the hood. Systems thinking first. Credential theater never.

In this first issue, we will talk about why LLMs are not the answer to everything. To people unfamiliar with systems design, building any system looks like this:

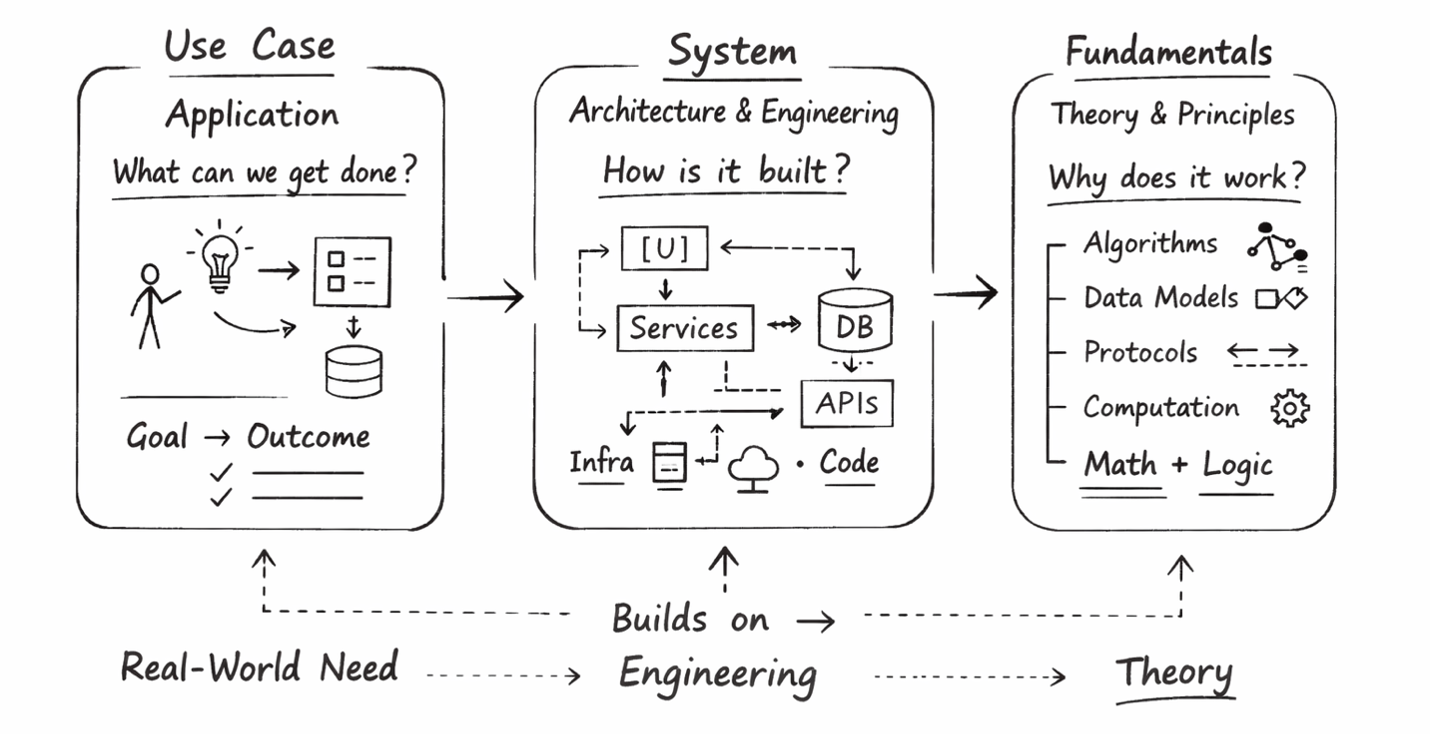

In truth, it takes three distinct steps to get to anything viable. It looks something like this:

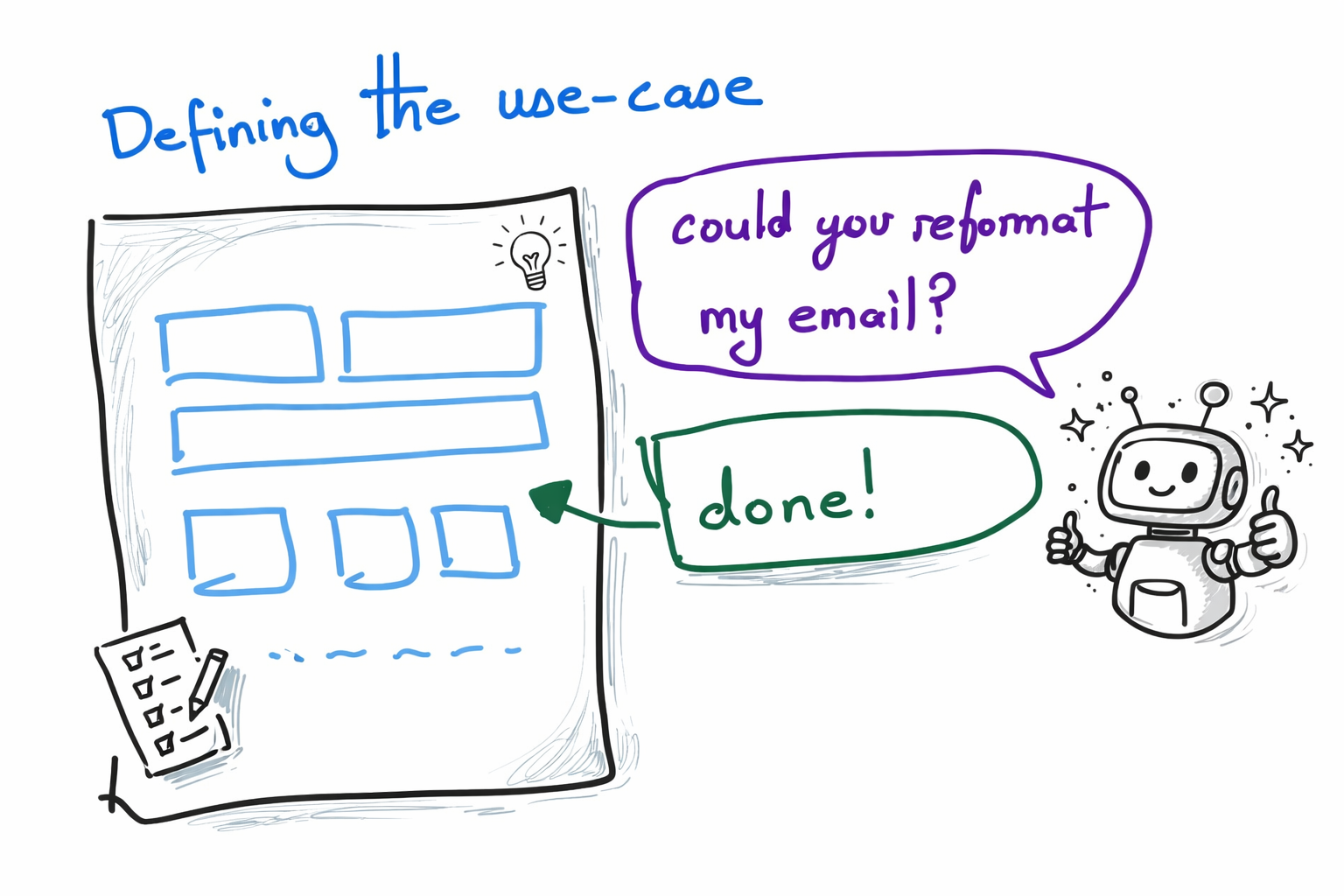

Step 1: The Real-World Use-Case

The approach is straightforward. We always begin with the real-world need, for several reasons:

- It allows us to speak at a business level rather than just a technical level. We can discuss whether it will sell, regardless of how it is built.

- It challenges us as software engineers. Instead of choosing a technical problem, we are presented with one, and that uncertainty is truly adventurous.

- It lets us play the strategic game of software rather than just the tactical build game.

- It enables us and our team to contribute more directly to driving revenue, rather than only building a robust product.

Whenever I meet folks from the product team or others in leadership, I always tell them, “Pretend that I am a genie. Which capability do you wish to come true for you?”

They asked for better ways to build marketing segments, journeys, and HTML emails. I delivered agents that write a custom DSL, turn intent into complex graphs with marketing wisdom, and integrate deeply with a third-party drag-and-drop editor, including an anti-hallucination layer to handle quirks.

Step 1 allows us to work backward and unleash programming creativity.

Step 2: System

Software engineering is surprisingly deceptive. Typing just a few lines of Python can produce complex analyses, interfaces, or agentic systems. This can create the mistaken impression that everyone is now a software engineer.

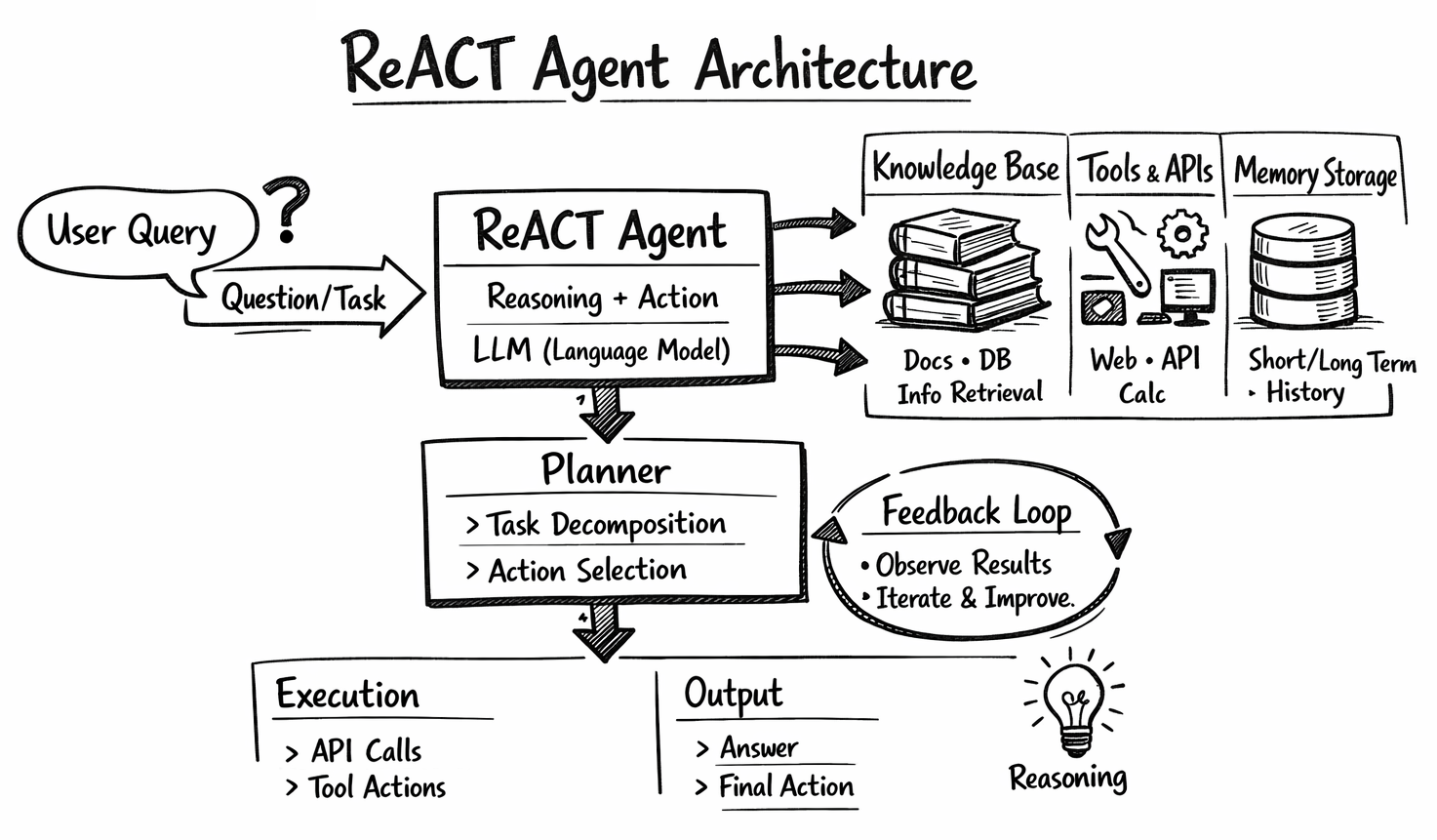

Building AI agents is not as easy as just calling an LLM and politely requesting it to do something.

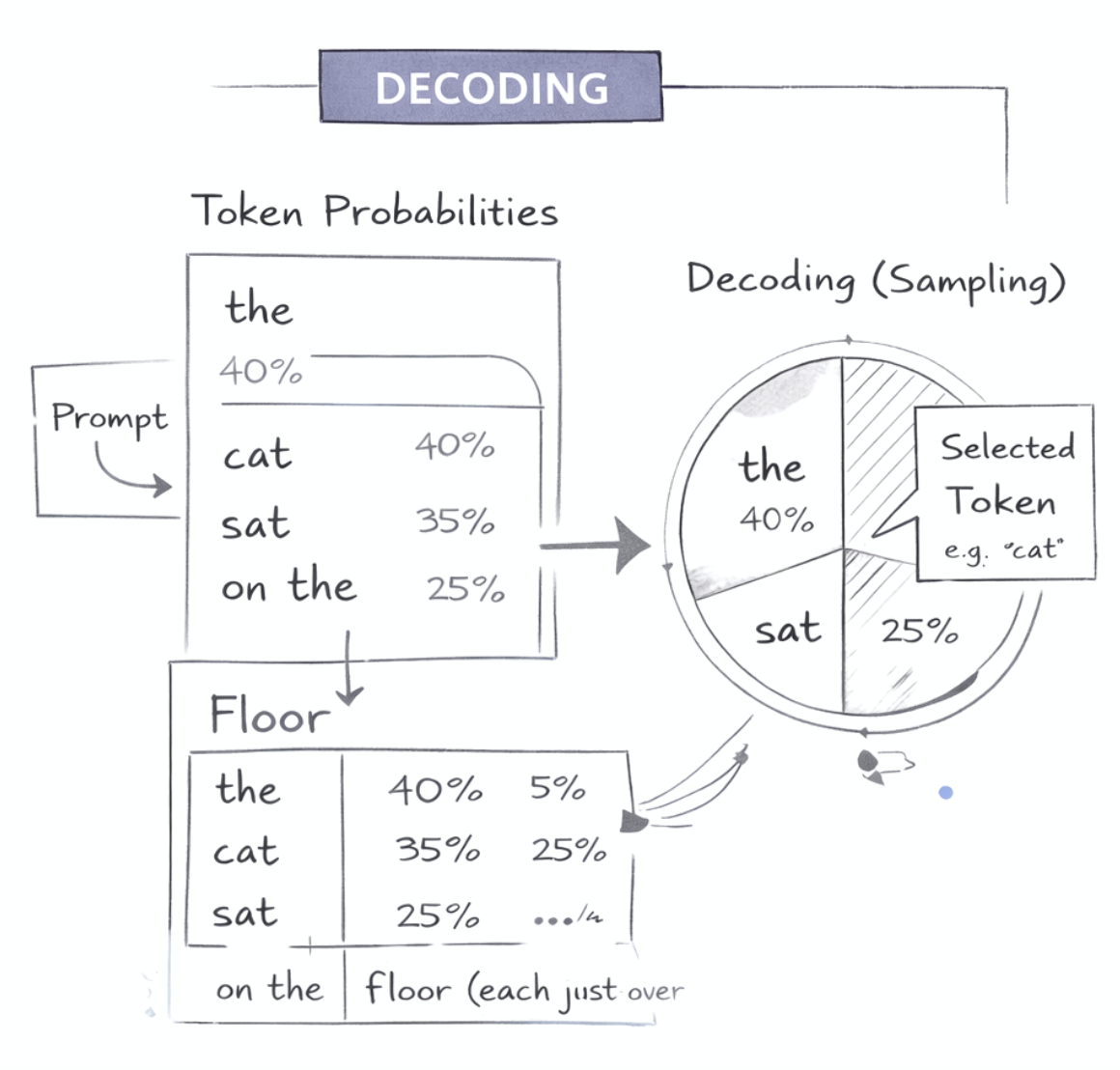

First, LLMs appear to follow instructions, but that is an illusion. A prompt shifts the probability distribution over possible outputs, and we craft prompts to shape that distribution precisely. This is not about giving better instructions. For example, we discovered a way to make precise edits to images using traditional models without causing side-effect changes.
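To make the "probability distribution" point concrete, here is a toy sketch. The token names and scores are invented for illustration and do not come from any real model. It only shows the shape of the idea: a tighter prompt concentrates probability on the output we want, but never makes it certain.

```python
import math

def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

# Hypothetical scores for the format of the model's reply.
# These numbers are made up; a real model scores tens of thousands of tokens.
vague_prompt = {"json": 1.0, "prose": 1.2, "markdown": 0.9}
constrained_prompt = {"json": 4.0, "prose": 0.5, "markdown": 0.3}

p_vague = softmax(vague_prompt)
p_constrained = softmax(constrained_prompt)

print(f"P(json | vague prompt)       = {p_vague['json']:.2f}")
print(f"P(json | constrained prompt) = {p_constrained['json']:.2f}")
```

Even in the constrained case, the unwanted outputs keep some probability. That leftover mass is why the system around the model matters.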

Second, LLMs require a robust system around them. They are stochastic, and when they fail, they fail spectacularly. Good design is no longer optional. For example, our anti-hallucination layer consists of roughly 10,000 lines of code to make sure the LLM follows instructions.
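What a system around the model means in miniature can be sketched as validate, then retry, then fall back. This is not our anti-hallucination layer, only a minimal illustration of the pattern. The names `ALLOWED_ACTIONS`, `validate`, and `guarded_call` are hypothetical, and `call_llm` stands in for whatever client you use.

```python
import json
from typing import Callable, Optional

# Hypothetical set of actions the downstream system knows how to execute.
ALLOWED_ACTIONS = {"send_email", "wait", "split"}

def validate(raw: str) -> Optional[dict]:
    """Return the parsed reply if it is well-formed, otherwise None."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    action = data.get("action")
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        return None
    return data

def guarded_call(
    call_llm: Callable[[str], str], prompt: str, max_attempts: int = 3
) -> Optional[dict]:
    """Call the model up to max_attempts times; never pass bad output along."""
    for _ in range(max_attempts):
        result = validate(call_llm(prompt))
        if result is not None:
            return result
    return None  # caller decides the fallback: default action, human review, etc.
```

The design choice is that the model's text is treated as untrusted input. Nothing reaches the rest of the system until deterministic code has checked it.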

Third, issues such as cost, ROI, and scalability are increasingly important. It used to be cloud bills in board meetings. Now, it is the AI bill, especially when it is hard to connect costs to clear ROI. That is why smarter engineering is needed: to do more with less.
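A back-of-the-envelope sketch shows how fast the AI bill adds up and what "more with less" can mean. Every number below is an assumption for illustration: the traffic, the token counts, and the per-million-token prices are hypothetical, not any vendor's real pricing.

```python
def monthly_cost(
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    price_in_per_million: float,
    price_out_per_million: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend in dollars from per-request token usage."""
    per_request = (
        input_tokens * price_in_per_million
        + output_tokens * price_out_per_million
    )  # dollars multiplied by one million
    return requests_per_day * days * per_request / 1_000_000

# Hypothetical workload: 10,000 requests a day, $3 / $15 per million tokens.
baseline = monthly_cost(10_000, 2_000, 500, 3.0, 15.0)

# Same workload after trimming the prompt from 2,000 to 800 input tokens.
trimmed = monthly_cost(10_000, 800, 500, 3.0, 15.0)

print(f"baseline: ${baseline:,.0f} per month")
print(f"trimmed:  ${trimmed:,.0f} per month")
```

Under these assumed numbers, a shorter prompt alone removes roughly a quarter of the bill, with no change to the model or the product.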

Step 3: Fundamentals

This is my favorite part, but without the context of a use case or a system, it is completely boring to read about.

Fundamentals are about how systems work and how LLMs operate, because understanding both is essential for inventing new use cases. We do not want to copy documentation and stay in tutorial hell forever. We want to apply principles to new situations and create innovative solutions.

This way, we not only learn how to design the current system but also anticipate how the design can evolve and improve as third-party capabilities develop.

So those are the three major steps, each of which is its own field. A good product needs depth, applicability, and viability, and it should also be fun to use. It is not enough to merely call an LLM and proclaim a product agentic.

Get the next issue in your inbox

If you want technical depth, applicability, fun, and profit all at once but have never found them together before, The Invariant is the place to be.

Subscribe today if you have not already.
