Issue #2

Cheap Intelligence, Expensive Judgment, And DSLs

LLMs made raw intelligence cheap. Judgment and plain system design still decide whether your product earns money. This issue also looks at how DSLs tighten agent-style loops.

Does learning calculus today make me smarter than Newton? Of course not.

I can learn in a weekend what took Newton years to invent. I have better notations, clearer explanations, and symbolic solvers in my browser.

In short, I have more leverage, not more genius. Access to intelligence is not the same as possessing it. And in this AI era, we are confusing access and possession.

LLMs made it easy to summon explanations, strategies, or decent content. It is tempting to think expertise itself is being commoditized. I think the opposite is true.

As intelligence gets cheaper, judgment gets more expensive.

GPS did not eliminate navigators. Photoshop did not eliminate designers. LLMs will not eliminate experts. If anything, abundant answers increase the premium on knowing which answers matter.

Which leads to a practical question: if prompting is not the final interface for expert intelligence, what comes next?

What if agents wrote DSLs instead of tool calls?

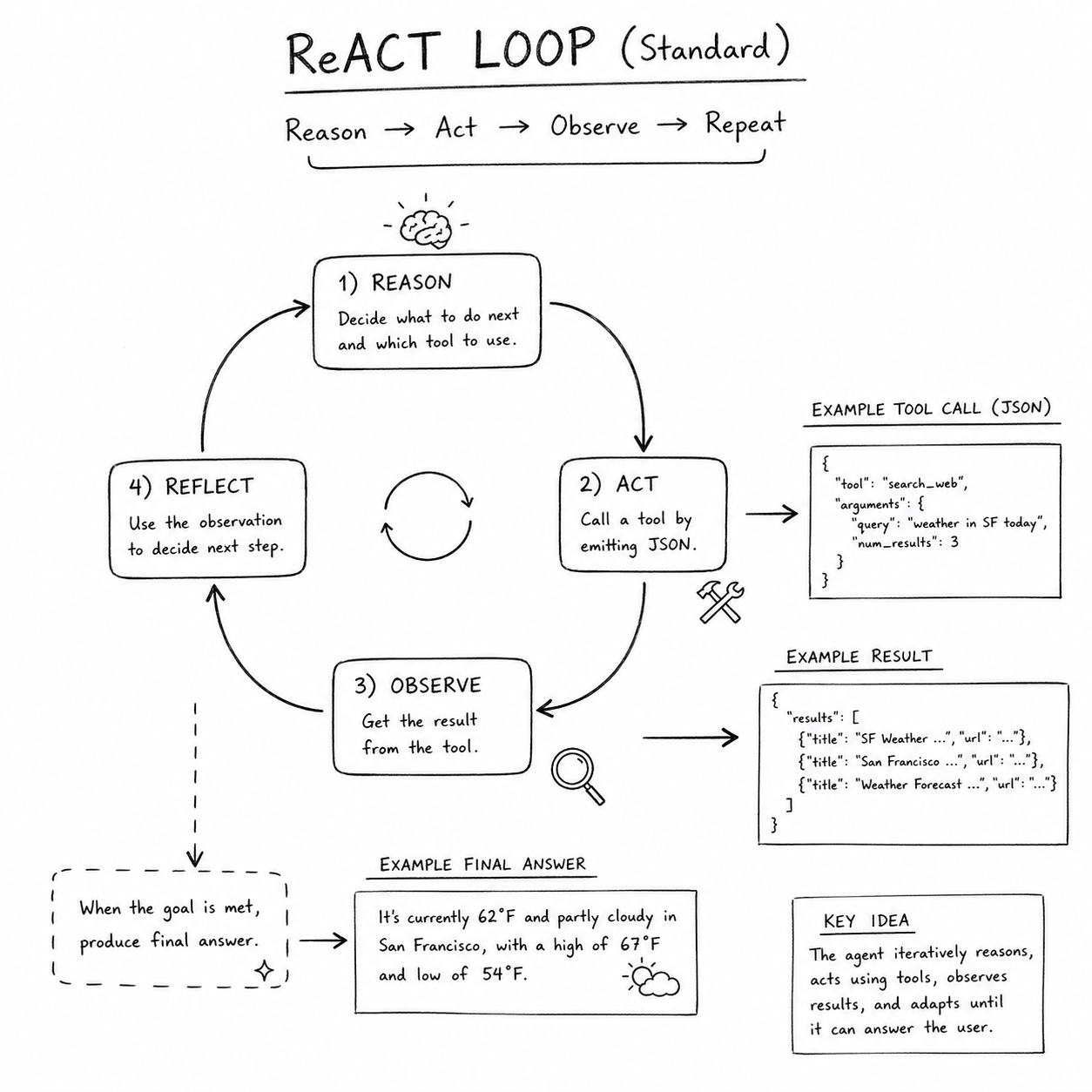

A lot of enterprise agent systems still use some flavor of ReAct, where the model alternates between reasoning and tool calls.

It works, but it is brittle:

- Tool calls are usually direct invocations without explicit conditionals. Any branching logic falls on the model's reasoning alone.

- Often the model is not really responding. It repeatedly calls tools to gather data, especially when later calls depend on IDs from earlier calls.

- That means more turns, more tokens, and more latency. More turns also increase hallucination and drift risk, and reduce determinism.
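To make the turn count concrete, here is a minimal sketch of such a loop. Everything in it is hypothetical: the three tools, their return values, and `scripted_model`, which stands in for an LLM so the example runs offline. Each dependent lookup costs one full model turn.

```python
# Hypothetical tools; each returns an ID that the next call needs.
TOOLS = {
    "find_customer": lambda email: {"customer_id": "C-1"},
    "latest_order": lambda customer_id: {"order_id": "O-9"},
    "order_invoice": lambda order_id: {"invoice_id": "INV-4021"},
}


def scripted_model(observations):
    """Stand-in for an LLM: choose the next tool call from what has been seen."""
    if "customer_id" not in observations:
        return ("find_customer", {"email": observations["email"]})
    if "order_id" not in observations:
        return ("latest_order", {"customer_id": observations["customer_id"]})
    if "invoice_id" not in observations:
        return ("order_invoice", {"order_id": observations["order_id"]})
    return None  # enough data gathered; the model can finally respond


def react_loop(email):
    """Run the loop and count how many model turns the lookups consumed."""
    observations, turns = {"email": email}, 0
    while (call := scripted_model(observations)) is not None:
        name, args = call
        observations.update(TOOLS[name](**args))  # one model turn per tool call
        turns += 1
    return observations, turns


observations, turns = react_loop("a@example.com")
print(turns, observations["invoice_id"])
```

Three chained lookups cost three turns before the model can say anything to the user. A real loop would also pay tokens and latency on each one.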

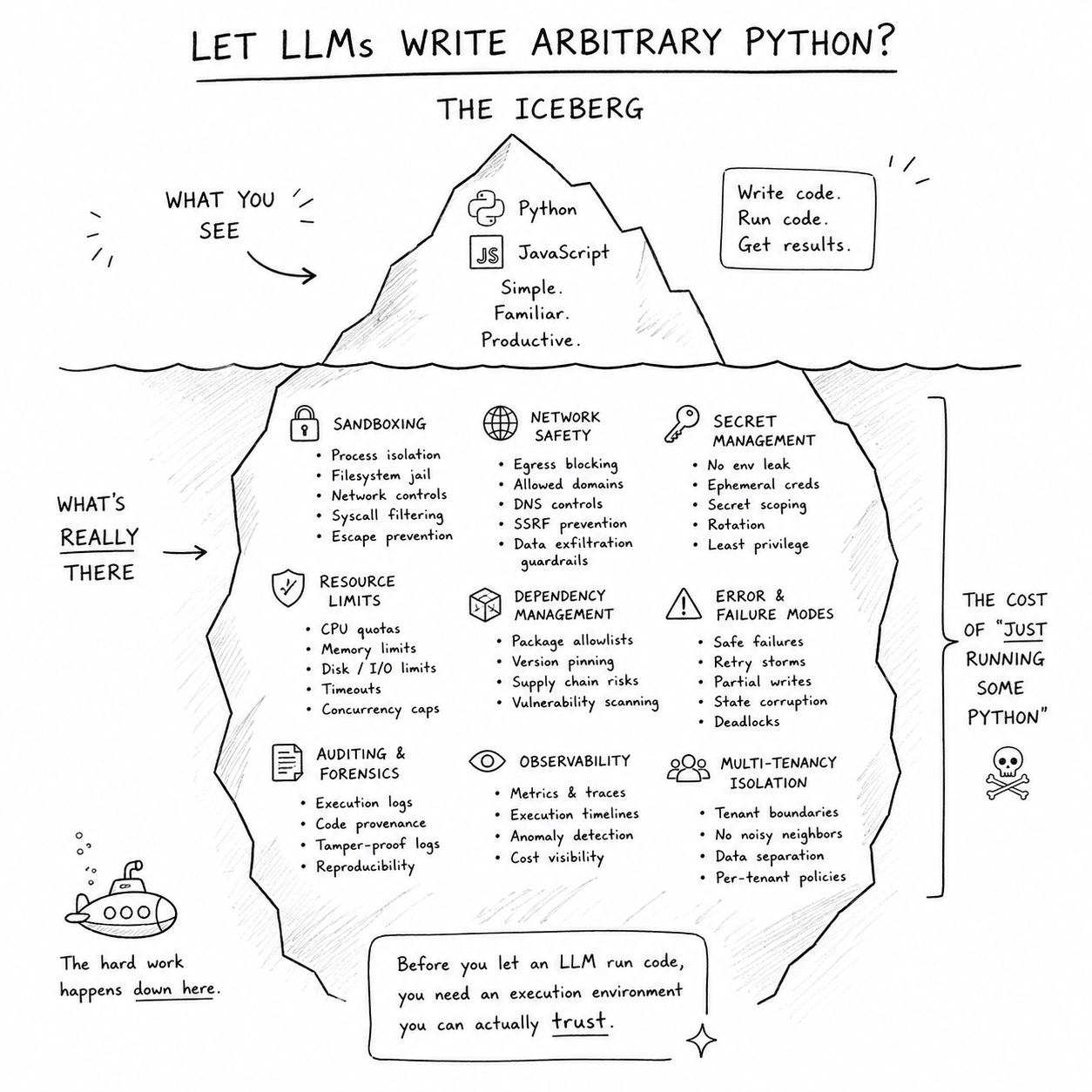

Foundation model companies get around many of these limits by letting the model write JavaScript or Python and executing it directly. But that is not easy for every startup:

- It requires serious security sandbox work.

- It imposes a generality tax that most teams with narrow use cases do not need to pay.

- Open-ended execution is harder to observe and debug.

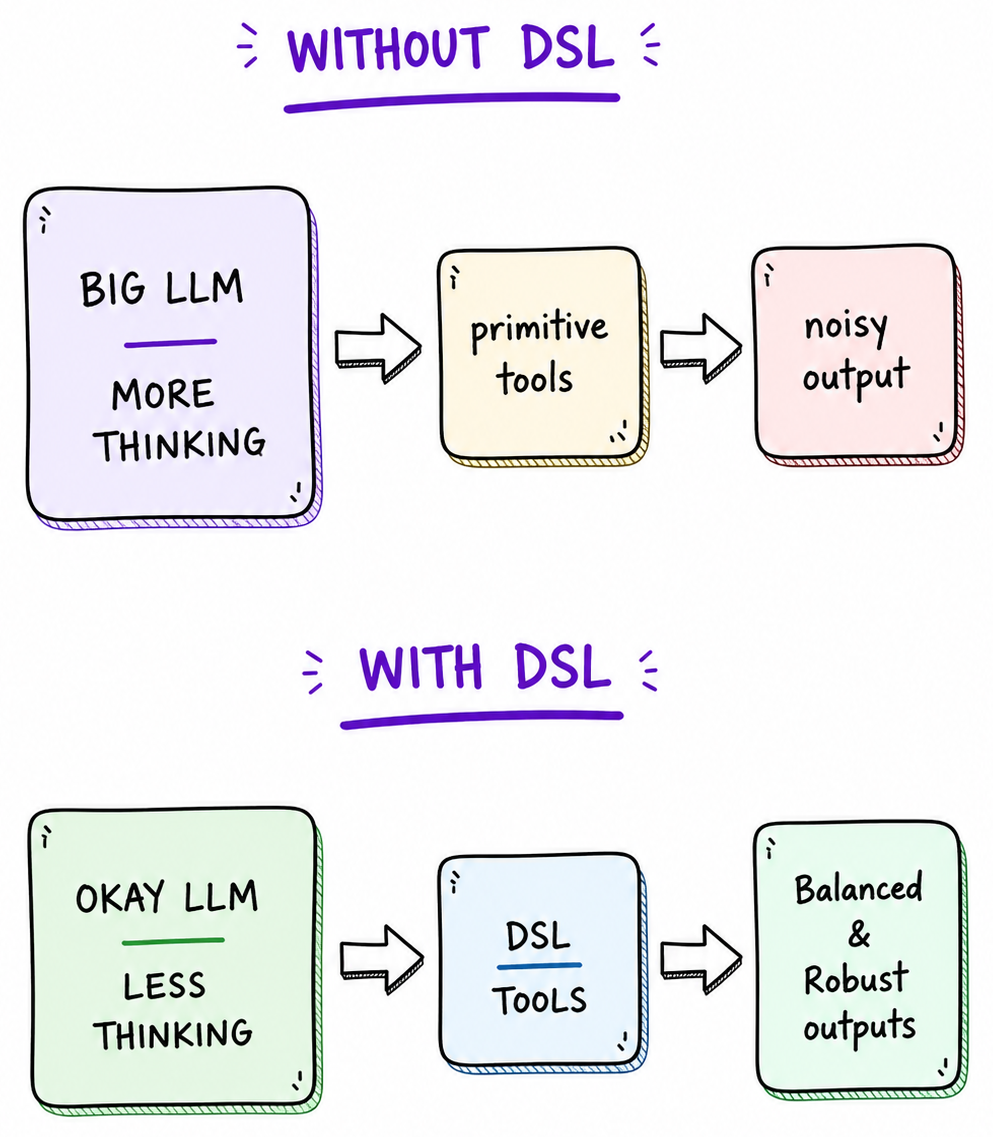

So the question becomes: what if we use DSLs instead?

Why DSLs?

Domain-specific languages are still underused for three reasons:

- Writing a parser and interpreter that are robust, debuggable, and evolvable is not trivial.

- Designing a good DSL is a different skill from implementing one. It demands deep domain understanding and production awareness.

- Practical support was historically hard to find. Even now, generic answers are common, but practical patterns are rare.

Still, DSLs are powerful:

- They express domain intent concisely. Boilerplate hides behind primitives.

- They act as natural guardrails. The model can only generate valid domain actions.

- They compose complex workflows while reducing how much low-level domain reasoning the model must do at runtime.
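The guardrail point can be sketched as a check that runs before anything executes. The registry below is an assumption for illustration: the primitive names and their argument sets are made up, and a real DSL would usually get this check for free from its grammar and interpreter.

```python
# Hypothetical registry: primitive name -> keyword arguments it accepts.
PRIMITIVES = {
    "notify": {"team"},
    "request_approval": {"role"},
    "send_payment": set(),
}


def validate(calls):
    """Return a list of error strings; an empty list means every call is allowed."""
    errors = []
    for name, kwargs in calls:
        if name not in PRIMITIVES:
            errors.append(f"unknown primitive: {name}")
            continue
        extra = set(kwargs) - PRIMITIVES[name]
        if extra:
            errors.append(f"{name}: unexpected arguments {sorted(extra)}")
    return errors


# A generated plan that strays outside the domain is rejected up front.
plan = [("notify", {"team": "oncall"}), ("delete_database", {})]
print(validate(plan))
```

The model can still produce a bad plan, but it cannot produce an action the language does not define.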

Design the language like a product

The trick is designing primitives that compose cleanly into real use-cases.

Let us look at a practical implementation sketch without too much code:

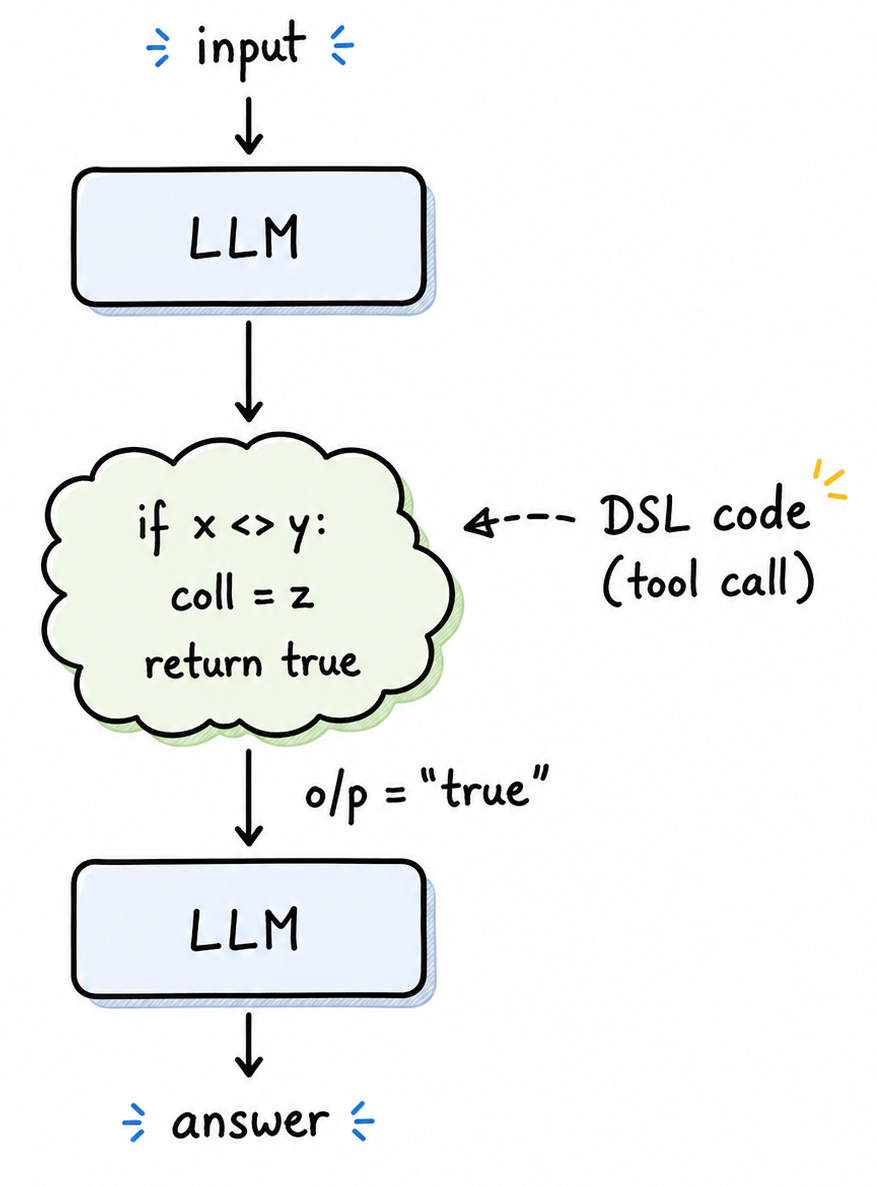

To implement this well, we need three parts: teach the model the language, parse it, and interpret it.

After language design, teaching usually starts with examples the model can mimic:

    # Example 1: classify then branch
    WHEN ticket.priority == "high" THEN
        notify(team: "oncall")
        fetch(customer_context)
    ELSE
        queue("support_backlog")

    # Example 2: retrieve and summarize with guardrails
    FETCH posts FROM "hackernews" LIMIT 20
    FILTER posts WHERE score > 100
    SUMMARIZE posts STYLE "exec-brief" MAX_TOKENS 220

    # Example 3: conditional tool sequence
    FETCH invoice(id: "INV-4021")
    IF invoice.amount > 10000 THEN
        request_approval(role: "finance_manager")
    ELSE
        send_payment()

I prefer compound examples where multiple techniques appear together. That helps mimicry. If the example set grows large, a retrieval layer can surface the best examples for the current context.
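A retrieval layer does not have to start sophisticated. The sketch below ranks stored examples by word overlap with the task description. The descriptions, the `top_examples` helper, and the Jaccard scoring are all assumptions; an embedding search could replace the scoring without changing the shape.

```python
import re

# Hypothetical example library: (plain-language description, DSL snippet).
EXAMPLES = [
    ("classify a support ticket by priority then branch and notify oncall",
     'WHEN ticket.priority == "high" THEN\n'
     '    notify(team: "oncall")\n'
     '    fetch(customer_context)\n'
     'ELSE\n'
     '    queue("support_backlog")'),
    ("retrieve posts from hackernews, filter by score and summarize",
     'FETCH posts FROM "hackernews" LIMIT 20\n'
     'FILTER posts WHERE score > 100\n'
     'SUMMARIZE posts STYLE "exec-brief" MAX_TOKENS 220'),
    ("fetch an invoice and request approval when the amount is large",
     'FETCH invoice(id: "INV-4021")\n'
     'IF invoice.amount > 10000 THEN\n'
     '    request_approval(role: "finance_manager")\n'
     'ELSE\n'
     '    send_payment()'),
]


def words(text):
    """Lowercase word set used for overlap scoring."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_examples(task, examples=EXAMPLES, k=2):
    """Return the k examples whose descriptions best overlap the task (Jaccard)."""
    task_words = words(task)

    def score(example):
        desc_words = words(example[0])
        union = task_words | desc_words
        return len(task_words & desc_words) / len(union) if union else 0.0

    return sorted(examples, key=score, reverse=True)[:k]


# Only the best-matching snippets go into the prompt.
for description, snippet in top_examples("summarize the top hackernews posts", k=1):
    print(snippet)
```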

The second part is parsing. You can start with recursive descent, but Python teams can move fast with lark:

    from lark import Lark

    # Grammar for a two-statement subset of the DSL: FETCH and IF/ELSE.
    grammar = r"""
        start: stmt+

        stmt: "FETCH" NAME "FROM" ESCAPED_STRING ("LIMIT" NUMBER)?
            | "IF" cond "THEN" stmt+ "ELSE" stmt+

        cond: NAME ">" NUMBER

        %import common.CNAME -> NAME
        %import common.ESCAPED_STRING
        %import common.NUMBER
        %import common.WS
        %ignore WS
    """

    # Build the parser (Earley by default) and parse a single statement.
    parser = Lark(grammar, start="start")
    tree = parser.parse('FETCH posts FROM "hackernews" LIMIT 20')

The final part is interpretation, where you execute expressions while maintaining state. Even a small interpreter can support meaningful conditionals:

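    # `fetch` and `eval_cond` are helpers you supply: one loads data,
    # the other evaluates a parsed condition against the current state.
    def run(program, state):
        for node in program:
            if node["op"] == "fetch":
                state[node["name"]] = fetch(node["source"], node.get("limit"))
            elif node["op"] == "if":
                # Pick a branch, then execute it recursively with the same state.
                branch = node["then"] if eval_cond(node["cond"], state) else node["else"]
                run(branch, state)
        return state

Building your own interpreter also helps with resource management. You can meter each script's "energy" (for example, a budget of steps or tool calls) and stop execution when the limit is exceeded. That protects against runaway loops and expensive plans.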
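To tie the three parts together, here is a dependency-free sketch: a hand-written recursive-descent parser (standing in for lark) that emits the node dictionaries `run` consumes, plus a step budget as one simple form of energy metering. The `END` keyword, the stubbed `fetch`, the `BudgetExceeded` exception, and the one-unit-per-statement cost are assumptions made for the sketch, not part of the grammar above.

```python
import re

# One token per match: NUMBER, quoted STRING, NAME/keyword, or the ">" operator.
TOKEN = re.compile(r'\s*(?:(\d+)|"([^"]*)"|([A-Za-z_][\w.]*)|(>))')
KINDS = ("NUMBER", "STRING", "NAME", "OP")


def tokenize(text):
    tokens, pos, text = [], 0, text.strip()
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise SyntaxError(f"unexpected input at offset {pos}")
        kind = KINDS[match.lastindex - 1]
        value = match.group(match.lastindex)
        tokens.append((kind, int(value) if kind == "NUMBER" else value))
        pos = match.end()
    return tokens


class Parser:
    def __init__(self, tokens):
        self.tokens, self.pos = tokens, 0

    def peek(self):
        return self.tokens[self.pos] if self.pos < len(self.tokens) else ("EOF", None)

    def expect(self, kind, value=None):
        tok = self.peek()
        if tok[0] != kind or (value is not None and tok[1] != value):
            raise SyntaxError(f"expected {value or kind}, got {tok[1]!r}")
        self.pos += 1
        return tok[1]

    def block(self, stop):
        """Parse statements until a keyword in `stop` or the end of input."""
        nodes = []
        while True:
            kind, value = self.peek()
            if kind == "EOF" or (kind == "NAME" and value in stop):
                return nodes
            nodes.append(self.stmt())

    def stmt(self):
        keyword = self.expect("NAME")
        if keyword == "FETCH":
            node = {"op": "fetch", "name": self.expect("NAME")}
            self.expect("NAME", "FROM")
            node["source"] = self.expect("STRING")
            if self.peek() == ("NAME", "LIMIT"):
                self.pos += 1
                node["limit"] = self.expect("NUMBER")
            return node
        if keyword == "IF":
            cond = (self.expect("NAME"), self.expect("OP", ">"), self.expect("NUMBER"))
            self.expect("NAME", "THEN")
            then = self.block({"ELSE"})
            self.expect("NAME", "ELSE")
            other = self.block({"END"})
            self.expect("NAME", "END")  # hypothetical terminator for the ELSE branch
            return {"op": "if", "cond": cond, "then": then, "else": other}
        raise SyntaxError(f"unknown statement {keyword!r}")


def fetch(source, limit=None):
    """Stub data source (hypothetical): returns placeholder item names."""
    return [f"{source}-{i}" for i in range(limit or 3)]


class BudgetExceeded(RuntimeError):
    pass


def run(program, state, budget):
    """Execute nodes, spending one unit of budget["steps"] per statement."""
    for node in program:
        if budget["steps"] <= 0:
            raise BudgetExceeded("step budget exhausted")
        budget["steps"] -= 1
        if node["op"] == "fetch":
            state[node["name"]] = fetch(node["source"], node.get("limit"))
        elif node["op"] == "if":
            name, _, threshold = node["cond"]
            branch = node["then"] if state[name] > threshold else node["else"]
            run(branch, state, budget)
    return state


source = ('FETCH posts FROM "hackernews" LIMIT 2 '
          'IF score > 100 THEN FETCH top FROM "archive" '
          'ELSE FETCH rest FROM "backlog" END')
program = Parser(tokenize(source)).block(stop=set())
state = run(program, {"score": 150}, budget={"steps": 10})
print(sorted(state))
```

This subset has no loops, so the budget only caps the total number of statements. The same counter keeps working once the language grows loops or recursion, and the cost per node can be weighted by how expensive the underlying call is.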

Three rabbit holes people seemed to like

A few related ideas on thinking and engineering took off recently:

- On thinking and programming [Steve Jobs]

- Computers vs. submarines [Dijkstra]

- Pablo Picasso on computers

One thought I am leaving with

Maybe the next breakthrough is not a larger model. Maybe it is a better language for thoughts and actions.

See you in Issue 3.

Get the next issue in your inbox

If you want technical depth, applicability, fun, and profit all at once but have never found them together before, The Invariant is the place to be.
